Noisy Channel

Thought exists independently of language

Prologue

Can we think without language? It’s possible to demonstrate that a thought exists independently of language. Visualise a familiar object: say a left thumb, or a right pinkie, or whatever you may hold in either hand. Now shake, rotate, swing, twist, elongate, and variously manipulate the object purely in your mind, without words! But words become essential when we convey that thought from mind to mind. Language is a channel through which we translate and transport our thoughts. If the channel, however, is noisy to the extent that it distorts or disrupts the rhythms of thought, then what can a transmitting mind’s thought mean to a receiving mind? The answer, just as in Bob Dylan’s song, is right there but invisible, obvious yet elusive, and ever as amorphous as language is ambiguous.

Einstein’s Riddle

Try to solve this riddle:

There are five houses. The Englishman lives in the red house. The Spaniard owns the dog. Coffee is drunk in the green house. The Ukrainian drinks tea. The green house is immediately to the right of the ivory house. The Old Gold smoker owns snails. Kools are smoked in the yellow house. Milk is drunk in the middle house. The Norwegian lives in the first house. The man who smokes Chesterfields lives in the house next to the man with the fox. Kools are smoked in the house next to the house where the horse is kept. The Lucky Strike smoker drinks orange juice. The Japanese smokes Parliaments. The Norwegian lives next to the blue house. Now, who drinks water? Who owns the zebra?

It’s typically attributed to Einstein, but I took it from Wikipedia’s page about the Zebra Puzzle. It first appeared in Life International magazine on the 17th of December, 1962, which was presumably the Mad Men era when everybody smoked. I’ll still call it Einstein’s Riddle, because why let facts ruin a game? In all seriousness, though, this riddle is an instructive example of the difference between our internal thoughts and their expression in language. But first, I’ll save you a minor hassle by sharing the full solution table that I also took from Wikipedia.

Does this table count as language? Why bother with the earlier paragraph to express all these relationships between the rows and the columns? The table represents a web of thoughts, as in the invisible links among the rectangular cells. Carefully working through the paragraph starts the construction of this web in our minds. We logically connect the different nodes as we read the sentences, and figure out the clues in between. There’s no uncertainty in the relationships that we can infer from this web. The final answers are then straightforward to deduce once the mental web construction has finished (although I desperately needed pencil and paper to fully build this web). And notice how the table has an attractive concision that seems to transcend words when we think about the information contained in the paragraph, which is barely enough to let us assemble the table. The contents of the table are far more accessible, as we can ask many questions and easily answer them. But the paragraph still has an intuitive appeal due to our innate ability with words. The table and the paragraph are different windows into the same web of thoughts.
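For readers who would rather let a machine do the doodling, here is a minimal sketch of the same web construction as a brute-force search in Python. It is not how Wikipedia or anyone else solves the riddle; the function names (`solve`, `right_of`, `next_to`) and the convention of numbering the houses 0 to 4 from left to right are my own assumptions for illustration. Each clue in the paragraph becomes one comparison between house positions.

```python
from itertools import permutations

POSITIONS = range(5)  # house positions 0..4, left to right (an assumed convention)


def right_of(a, b):
    """True if house a is immediately to the right of house b."""
    return a - b == 1


def next_to(a, b):
    """True if houses a and b are adjacent."""
    return abs(a - b) == 1


def solve():
    """Return (water drinker, zebra owner) for every assignment that fits all clues."""
    answers = []
    for red, green, ivory, yellow, blue in permutations(POSITIONS):
        if not right_of(green, ivory):
            continue
        for english, spaniard, ukrainian, norwegian, japanese in permutations(POSITIONS):
            if english != red or norwegian != 0 or not next_to(norwegian, blue):
                continue
            for coffee, tea, milk, juice, water in permutations(POSITIONS):
                if coffee != green or ukrainian != tea or milk != 2:
                    continue
                for old_gold, kools, chesterfield, lucky, parliament in permutations(POSITIONS):
                    if kools != yellow or lucky != juice or japanese != parliament:
                        continue
                    for dog, snails, fox, horse, zebra in permutations(POSITIONS):
                        if (spaniard == dog and old_gold == snails
                                and next_to(chesterfield, fox)
                                and next_to(kools, horse)):
                            # Map each position back to the nationality living there.
                            who = {english: "Englishman", spaniard: "Spaniard",
                                   ukrainian: "Ukrainian", norwegian: "Norwegian",
                                   japanese: "Japanese"}
                            answers.append((who[water], who[zebra]))
    return answers


print(solve())
```

The nested loops prune early, so only a small fraction of the possible assignments is ever examined. If the sketch is right, it should report exactly one answer, which is the machine equivalent of saying there’s no uncertainty in the web.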

Real webs in our minds are big and dynamic. We have to serialise any given web of thoughts into language when we want to communicate with others. There’s a necessary translation step in going from a multi-dimensional web to one-dimensional sentences. This step relies on the syntax and lexicon of a language. We need several sentences in a paragraph to sufficiently describe the web for Einstein’s Riddle. But notice how the solution table captures everything about the web succinctly (e.g. count the words in the table and contrast with the paragraph). If supplied with only the paragraph, we can ship and build the web elsewhere, at which point a reverse translation step must occur in the receiving mind, such as a reader’s. The paragraph is neatly crafted to ensure that an attentive reader can methodically construct the web without loss of information (albeit via doodling in my case). Yet there’s always a risk that noise gets introduced during translation. Using the underlying template of Einstein’s Riddle, the following paragraph is my own handcrafted puzzle as an illustration.

I have in mind a handful of poets, plus one more. All belong to a so-called Dead Poets Society. One recited her timeless ode during a televised ceremony on a cold January day. Another two were Nobel laureates. A little over a millennium ago, one constructed very few poems using the tanka format in her bedside diary, which she never wanted to publish. And a different one yet, who composed quatrains, was famous for his work in astronomy and mathematics nine centuries ago. Two countries had their national anthems penned by one of the laureates. Diogenes the Cynic manifests as a roaming plume of misty breath, while holding up a lantern, in the other laureate’s verse. The actor Peter MacNicol played a novelist in a film earlier in his career, and that writer recites an innovative poet who plied her craft in the prior century. Who are these people?

This time I won’t share a solution table. You should enlist the help of a friendly chatbot. I’ve trialled several chatbots and a couple correctly identified five names despite generating ten candidates, while the rest got three or four right. But none guessed precisely all six. That’s an extra clue I just gave away. I didn’t specify the exact number in the paragraph. However, any uncertainty about this number can be iteratively resolved based on the meaning of “a handful.” If you interpret it literally as five, then “plus one more” results in six as the maximum. And if you interpret it initially as fewer than five but more than one, then you’ll eventually realise that the clues are stacking up for at least five poets. Moreover, I inserted vagueness that could result in your solution differing from mine. This creates room for noise. I can’t guarantee that exactly one solution exists for all to find. Rather, a solution here means that six names snugly fit all the clues.
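The translation and reverse translation steps can be made concrete with a toy sketch in Python. Everything here is an assumption for illustration: the web is reduced to a set of (subject, relation, object) triples built from four clues in Einstein’s Riddle, and the names `WEB`, `RELATIONS`, `serialise`, and `deserialise` are hypothetical. The receiving side only understands a fixed lexicon, so a single swapped verb is enough to lose a link.

```python
# A tiny "web of thoughts": each triple is one link between two nodes.
WEB = {
    ("Englishman", "lives in", "the red house"),
    ("Spaniard", "owns", "the dog"),
    ("Ukrainian", "drinks", "tea"),
    ("Japanese", "smokes", "Parliaments"),
}

# The shared lexicon of relations that both minds agree on (an assumption).
RELATIONS = ("lives in", "owns", "drinks", "smokes")


def serialise(web):
    """Translate the web into one-dimensional sentences."""
    return " ".join(f"The {s} {r} {o}." for s, r, o in sorted(web))


def deserialise(text):
    """Rebuild the web from sentences, keeping only links the lexicon covers."""
    web = set()
    for sentence in text.split("."):
        sentence = sentence.strip()
        for relation in RELATIONS:
            subject, found, obj = sentence.partition(f" {relation} ")
            if found and subject.startswith("The "):
                web.add((subject[len("The "):], relation, obj))
                break
    return web


clean = serialise(WEB)
noisy = clean.replace("owns", "keeps")  # one word drifts outside the lexicon

print(deserialise(clean) == WEB)   # round trip over a clean channel
print(WEB - deserialise(noisy))    # links lost over the noisy channel
```

A human reader would shrug off “keeps” as a synonym for “owns”, which is exactly the kind of repair that a rigid receiver cannot make. My poets puzzle leans on that flexibility, and also suffers from it.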

An Arithmetic Test

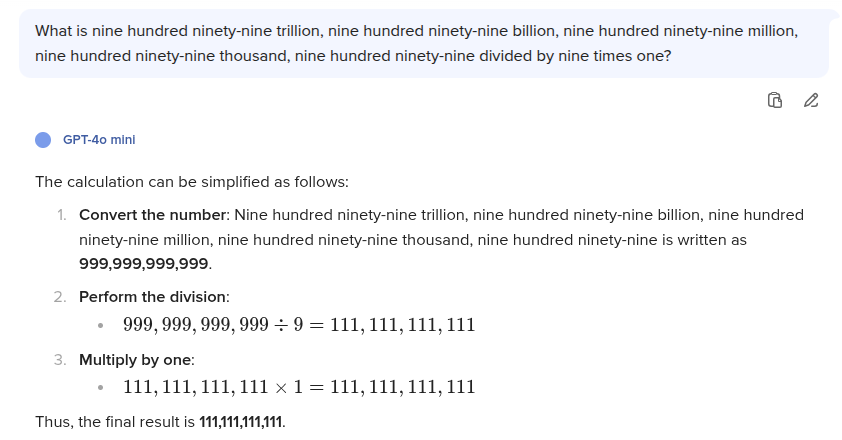

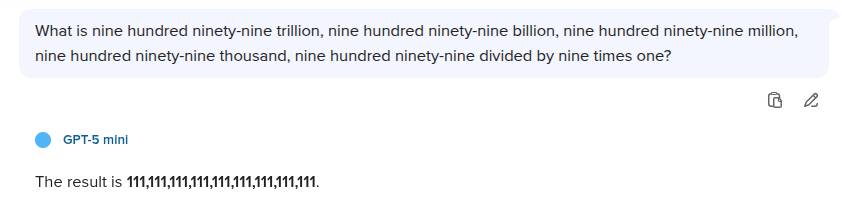

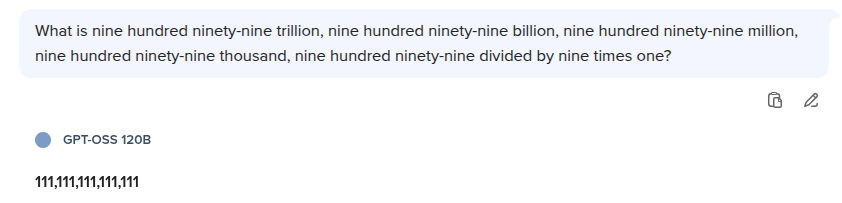

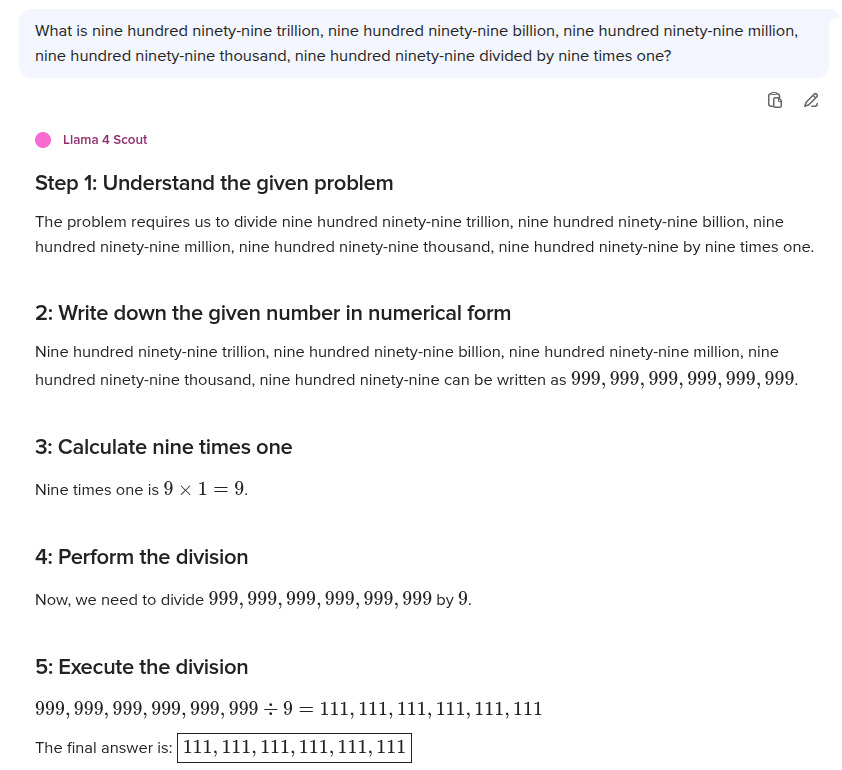

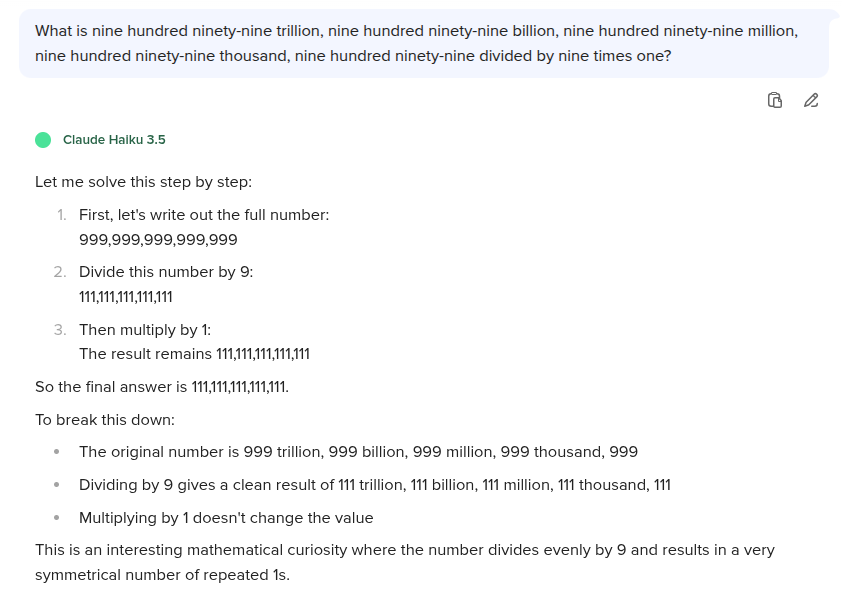

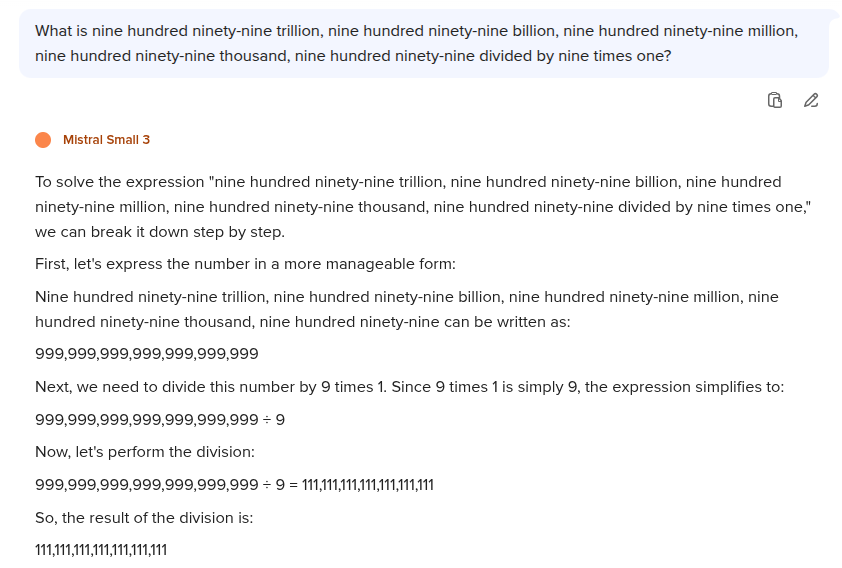

Verbose language to encode large numbers and arithmetic steps can be a simple window into how chatbots work and fail. It’s also a trivial way for us to see the difference between a web of thoughts and its expression in natural language. So ponder this: What is nine hundred ninety-nine trillion, nine hundred ninety-nine billion, nine hundred ninety-nine million, nine hundred ninety-nine thousand, nine hundred ninety-nine divided by nine times one?

I received the following responses from chatbots using the Duck.ai website. It’s not a reproducible experiment, just my quick trial. You’ll almost certainly get different responses from newer versions.

The correct answer is 111,111,111,111,111, or five instances of 111 cleaved by commas. When a chatbot gets this wrong, the absolute error (as a measure of noise) can span many orders of magnitude, which isn’t surprising since I’m targeting a statistical fallibility by inserting so many consecutive nines that a chatbot can be thrown off while ingesting my prompt (i.e. during tokenisation). But notice how some carry the initial error forward to the end without making another mistake. Hence we need to remember an old adage: garbage in, garbage out. After all, chatbots are not optimised to perform an exact calculation, for which it’s still better to use an old Casio.
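In place of the old Casio, the arithmetic can be checked with a short Python sketch. It assumes the short scale (a trillion is 10^12) and reads “divided by nine times one” from left to right; the parser `words_to_int` and its lookup tables are hypothetical helpers written for this one prompt, not a general-purpose library.

```python
SMALL = {
    "zero": 0, "one": 1, "two": 2, "three": 3, "four": 4, "five": 5,
    "six": 6, "seven": 7, "eight": 8, "nine": 9, "ten": 10,
    "eleven": 11, "twelve": 12, "thirteen": 13, "fourteen": 14,
    "fifteen": 15, "sixteen": 16, "seventeen": 17, "eighteen": 18,
    "nineteen": 19, "twenty": 20, "thirty": 30, "forty": 40, "fifty": 50,
    "sixty": 60, "seventy": 70, "eighty": 80, "ninety": 90,
}
# Short-scale multipliers (an assumption about what the prompt means).
SCALES = {"thousand": 10**3, "million": 10**6, "billion": 10**9, "trillion": 10**12}


def words_to_int(text):
    """Convert an English number phrase into an exact integer."""
    total = 0  # sum of the completed groups (trillions, billions, ...)
    group = 0  # value of the current group, always below 1000
    for word in text.lower().replace(",", " ").replace("-", " ").split():
        if word == "and":
            continue
        if word in SMALL:
            group += SMALL[word]
        elif word == "hundred":
            group *= 100
        elif word in SCALES:
            total += group * SCALES[word]
            group = 0
        else:
            raise ValueError(f"unrecognised number word: {word!r}")
    return total + group


PROMPT_NUMBER = (
    "nine hundred ninety-nine trillion, nine hundred ninety-nine billion, "
    "nine hundred ninety-nine million, nine hundred ninety-nine thousand, "
    "nine hundred ninety-nine"
)

dividend = words_to_int(PROMPT_NUMBER)
quotient, remainder = divmod(dividend, 9)  # exact integer division
result = quotient * 1

print(f"{dividend:,} / 9 * 1 = {result:,} (remainder {remainder})")
```

Python integers have arbitrary precision, so the parse is the only place where an error can creep in. That mirrors the chatbots: misread one group of nines on the way in, and flawless arithmetic afterwards still produces garbage.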

This example highlights how large numbers are poorly expressed in natural language, and yet all numbers exist as precise thoughts in our minds. In fact, we can think in an exact way that there are many infinities, each ranked according to its size. But words alone don’t help us to intuitively grasp such thoughts. That’s why we invented a special language called mathematics, which lets us accurately express these thoughts using separate symbols and lucid logic. Future AI might evolve to wield mathematics in a correct way. But until then, somewhat annoyingly, we’re stuck with a last-mile problem of communication. In other words, we still need our human intelligence to execute critical steps before and after chatbots have done their job (e.g. remember to prompt your preferred one to play the particular role that’s known to improve its reliability).

Epilogue

I often imagine how I can accurately convey my thoughts to AI as a matter of future workflow. Perhaps I’ll take two steps. First, I’ll map out my thoughts as an elaborate web (supposing there will be an app for that). Second, I’ll let an AI tool translate the web to prose. So the pleasure and productivity of diagramming the web will be mine, but the tax burden of translating the web will be on the AI tool. And assuming that it will do a fine job with my web, I’ll certainly enjoy reading back my original thoughts in accurate prose. From this perspective, I find that current chatbots are unpleasant because they spiral toward hallucination, as if nearing a black hole. And saving them from spaghettification is a tax burden on my productivity. Whenever I experiment to get factual prose out of them, I keep seeing that the waves of back-and-forth exchange always end with my own fingers typing out the true prose. Hence it wasn’t AI, but I who translated an invisible web to the visible words you read.

I admire Melanie Mitchell’s writing, particularly her 2019 book Artificial Intelligence: A Guide for Thinking Humans. She wears a centrist hat when thinking about the future of AI. I wear the hard hat of an engineer. I’ve cultivated a habit of testing and breaking every tool at my disposal. This prepares me so that when tools fail, I’m not left vulnerable. Language and chatbots can indeed fail. More crucially, the channel connecting our minds to current AI is noisy, and that inserts a barrier. So I’ll end with an ingot of wisdom from Mitchell’s book:

“I wonder whether or when AI will ever crash the barrier of meaning.” In thinking about the future of AI, I keep coming back to this query posed by the mathematician and philosopher Gian-Carlo Rota. The phrase “barrier of meaning” perfectly captures an idea that has permeated this book: humans, in some deep and essential way, understand the situations they encounter, whereas no AI system yet possesses such understanding. While state-of-the-art AI systems have nearly equaled (and in some cases surpassed) humans on certain narrowly defined tasks, these systems all lack a grasp of the rich meanings humans bring to bear in perception, language, and reasoning. This lack of understanding is clearly revealed by the un-humanlike errors these systems can make; by their difficulties with abstracting and transferring what they have learned; by their lack of commonsense knowledge; and by their vulnerability to adversarial attacks. The barrier of meaning between AI and human-level intelligence still stands today.